I grew up with a C64. A relative gave it to me; by then, it had started going out of fashion in favor of AOL, Prodigy, and all things online.

I learned to love programming because of it, and it started a lifetime of working with computing systems.

I’ve recently rediscovered VICE. Not only are the C64 and C128 emulated, but the C64 with a Creative Micro Designs SuperCPU is emulated as well. I have access to all the things I wanted as a kid but couldn’t afford, on the computer I already have today on my desk.

So I start up the emulated C64 with SCPU, and lo and behold, it’s awesome. Programming with BASIC on this is on another level; much more comfortable than my memory of it.

Today’s find: for some reason, doing arithmetic on integer variables is slower than doing arithmetic on the default floating point variable type.

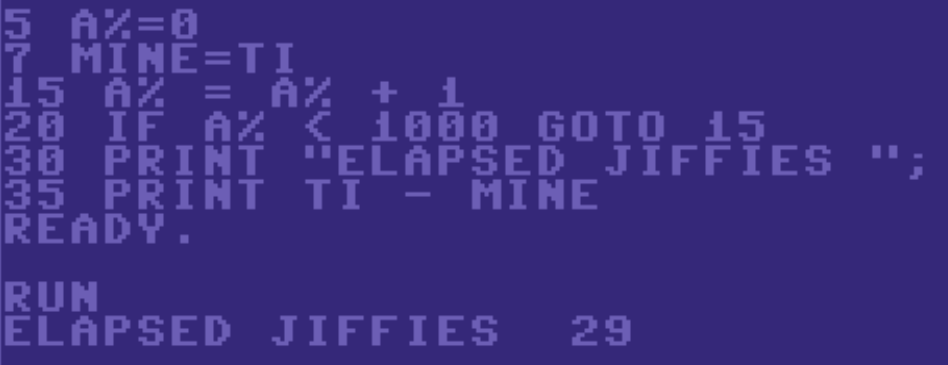

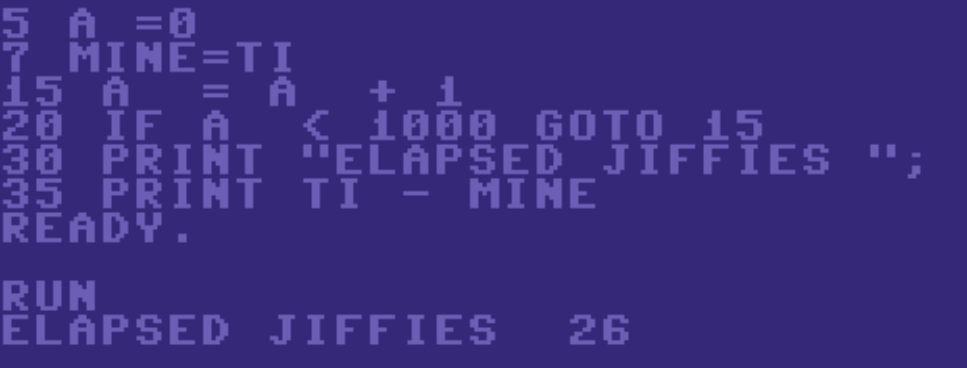

As an example:

The difference is 3/60 of a second: the arithmetic takes about 10.3% longer on an integer variable than on a floating point variable.
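For anyone who wants to try a comparison like this by typing it in, here is a minimal sketch. It is not the exact program from the screenshots; the loop count of 1000, the variable names, and the `A=A+1` operation are my own assumptions for illustration. It relies on `TI`, the built-in jiffy clock that ticks 60 times per second.

```basic
10 REM SKETCH ONLY: LOOP COUNT AND NAMES ARE ASSUMED
20 REM TIME 1000 ADDITIONS ON A FLOATING POINT VARIABLE
30 A=0:T=TI
40 FOR I=1 TO 1000:A=A+1:NEXT
50 PRINT "FLOAT:";TI-T;"JIFFIES"
60 REM SAME LOOP ON AN INTEGER VARIABLE
70 A%=0:T=TI
80 FOR I=1 TO 1000:A%=A%+1:NEXT
90 PRINT "INTEGER:";TI-T;"JIFFIES"
```

The loop counter `I` stays floating point in both halves because this BASIC does not accept an integer variable as a `FOR` index, so the only thing that changes between the two runs is the variable being added to. The exact jiffy counts will depend on the machine and emulator settings.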

Maybe BASIC included integer variables for memory savings more than for speed. Maybe the floating point version is faster because the BASIC interpreter spends more time just decoding the integer version of the code.

Surprising to me either way, considering the complexity of performing floating point math on a 6502.
